In vRealize Automation 8.x there is out-of-the-box integration for Ansible and Ansible Tower built directly into the Cloud Templates designer. Still, you can also run this integration as part of an Event Broker Service (EBS) subscription. That way you can detect any single job failure and decide whether to proceed with the deployment or scrap it. The ability to run Ansible Tower jobs with a REST call is also useful if you want to design a day-2 operation on a VM around existing Ansible automation.

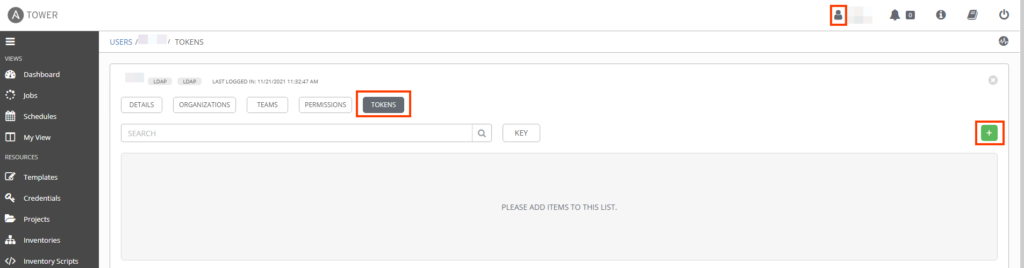

So how can we achieve this goal? Let's start with the Ansible configuration. You will need an access token for Ansible Tower. In Ansible Tower, open your user settings, click “Tokens”, and then click the small plus sign on the right.

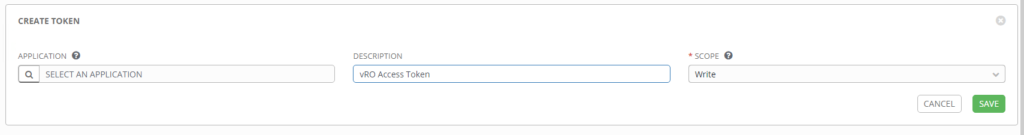

Leave the application field empty, give your token a meaningful description, change the scope to Write, and click Save. Remember to save the token value somewhere. I keep it in vRealize Orchestrator as a SecureString configuration element.

Now back to vRO. Create a new workflow called “Run Ansible Job”. We will need a few inputs for this workflow:

- jobName: <String> – name of the Ansible Tower job to run

- inventoryName: <String> – Ansible Tower inventory containing the host where the job will run

- hostname: <String> – machine hostname

And some attributes:

- restHost: <REST:RESTHost> – Ansible Tower REST endpoint

- token: <String> – Ansible Tower authorization token generated earlier

- repNumber: <Number> – number of repeats for the status-check loop

- sleepTime: <Number> – time to sleep between status checks, in seconds
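As a rough guide for choosing repNumber and sleepTime, the sketch below estimates the longest time the status-check loop in this post can wait. It assumes the fixed 10 second start delay used in the script and ignores the time spent on the REST calls themselves. The function name maxWaitSeconds is made up for illustration and is not part of vRO or Ansible Tower.

```javascript
// Hypothetical helper, not part of vRO or Ansible Tower.
// Estimates the worst-case wait of the polling loop in this post:
// one fixed start delay, then a sleep after every check except the last.
function maxWaitSeconds(repNumber, sleepTime, startDelay) {
    if (startDelay === undefined) {
        startDelay = 10; // the script sleeps 10 s before the first check
    }
    var checks = repNumber + 1; // the loop runs for i = 0..repNumber
    var sleeps = repNumber;     // no sleep after the final check
    return {
        checks: checks,
        seconds: startDelay + sleeps * sleepTime
    };
}

var estimate = maxWaitSeconds(30, 10);
// estimate.checks is 31 and estimate.seconds is 310
```

So with repNumber set to 30 and sleepTime set to 10, a job has a little over five minutes to finish before the workflow times out.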

The workflow first looks up the inventory by name and gets its id, and then does the same for the job. Once we have the inventory id and the job id, we can build the JSON object that will be sent with a POST request to start the job. At this point I give Ansible a few seconds to start the playbook, and then the workflow waits in a loop, checking whether the job has finished, what its status is, and whether it failed. What you do next is up to you. I have a white list of jobs that are allowed to fail without stopping the deployment, but if something important fails that we do not want to fix later, I fail the whole deployment.

//Get inventory id by name

System.debug("Get inventory id by name...");

var operationUrl = "/api/v2/inventories?search=" + encodeURIComponent(inventoryName); //encode spaces and special characters in the name

var response = makeRequest("GET", operationUrl, null);

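if (!response.results || response.results.length === 0) {

throw "Inventory not found: " + inventoryName;

}

var inventoryId = response.results[0].id;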

System.debug("inventoryId: " + inventoryId);

//Get job id by name

System.debug("Get Job Id by name...");

var operationUrl = "/api/v2/job_templates?name=" + encodeURIComponent(jobName); //encode spaces and special characters in the name

var response = makeRequest("GET", operationUrl, null);

if (!response.results || response.results.length === 0) {

throw "Job not found: " + jobName;

}

var jobId = response.results[0].id;

System.debug("jobId: " + jobId);

//build JSON

var jsonObj = JSON.parse('{"inventory": 0,"limit":""}');

jsonObj.limit = hostname;

jsonObj.inventory = inventoryId;

var json = JSON.stringify(jsonObj);

var operationUrl = "/api/v2/job_templates/{jobId}/launch/";

operationUrl = operationUrl.replace("{jobId}", jobId);

var response = makeRequest("POST", operationUrl, json);

jobId = response.id; //from here on jobId is the id of the launched job, not of the job template

System.sleep(10*1000); //10s sleep

System.debug("Check Job Status...");

var operationUrl = "/api/v2/jobs/{jobId}/";

operationUrl = operationUrl.replace("{jobId}", jobId);

for(var i=0; i <= repNumber; i++){

var response = makeRequest("GET", operationUrl, null);

System.log("Job: " + jobName + ", Run iteration: " + i);

System.debug("JOB ID: " + jobId);

var status = response.status;

var failed = response.failed;

var jobExplanation = response.job_explanation;

var finished = response.finished;

System.debug("status: " + status);

System.debug("finished: " + finished);

System.debug("failed: " + failed);

System.debug("jobExplanation: " + jobExplanation);

if (finished){

System.debug("Job finished with status: " + status);

if (true == failed){

throw "Ansible Job: " + jobName + " Failed, jobExplanation: " + jobExplanation; //throw leaves the loop, no break needed

}

else{

System.log("Ansible Job: " + jobName + " Status: " + status + ", jobExplanation: " + jobExplanation);

break;

}

}

else {

System.debug("Job in progress sleep for " + sleepTime + "s");

if (i == repNumber){

throw "Time out waiting for Ansible job to finish";

}

System.sleep(sleepTime * 1000);

}

}

function makeRequest(type, operationUrl, json){

var request = null;

if(json){

request = restHost.createRequest(type, operationUrl, json);

}

else{

request = restHost.createRequest(type, operationUrl);

}

request.setHeader("Content-Type", "application/json");

request.setHeader("Authorization", "Bearer " + token);

var response = request.execute();

if(response.statusCode > 399){

throw "Response: " + response.contentAsString;

}

return JSON.parse(response.contentAsString);

}
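The white list mentioned earlier is not part of the script above. A minimal sketch of how it could look is shown below, assuming the list is a plain array of job names. The function name evaluateJobResult, the array allowedToFail, and the job names in it are all made up for illustration.

```javascript
// Hypothetical helper: decides what to do with a finished job.
// allowedToFail is an assumed array of job names that may fail
// without stopping the deployment (the "white list").
function evaluateJobResult(jobName, failed, jobExplanation, allowedToFail) {
    if (!failed) {
        return "succeeded";
    }
    if (allowedToFail.indexOf(jobName) !== -1) {
        // Failure is tolerated: report it and let the deployment continue.
        return "failed-ignored";
    }
    // Anything else stops the workflow, which fails the deployment.
    throw "Ansible Job: " + jobName + " Failed, jobExplanation: " + jobExplanation;
}

// Example names only; use the names of your own jobs.
var allowedToFail = ["Install monitoring agent", "Register in CMDB"];
var outcome = evaluateJobResult("Register in CMDB", true, "host unreachable", allowedToFail);
// outcome is "failed-ignored"
```

In the workflow, a call like this could take the place of the `if (true == failed)` branch, with the list kept in an attribute or a configuration element so it can be changed without editing the script.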

If you would like to run a job with additional inputs (so-called extra_vars in Ansible), you will have to add a few things.

First, duplicate your “Run Ansible Job” workflow as “Run Ansible Job with Inputs”. Add an additional input named extraVars of type String to this workflow.

/***

Inputs:

extraVars <String> Ansible extra_vars object as a JSON string

Example: {"input1":"M1234","input2":"Linux Server"}

***/

Now change the section where we build the JSON object sent to Ansible Tower. It now has an additional extra_vars section:

//build JSON

var extraVarsObj = JSON.parse(extraVars);

var jsonObj = JSON.parse('{"inventory": 0,"limit":"","extra_vars":""}');

jsonObj.inventory = inventoryId;

jsonObj.limit = hostname;

jsonObj.extra_vars = extraVarsObj;

var json = JSON.stringify(jsonObj);

The last thing you have to do is build an extra_vars JSON object every time you call the “Run Ansible Job with Inputs” workflow. Its contents depend on the configuration of the Ansible job. Here is an example for a job with two inputs named input1 and input2:

//Building extraVars object

var extraVars = {};

extraVars["input1"] = "value1";

extraVars["input2"] = "value2";

var jobNameExtraVars = JSON.stringify(extraVars); //pass this string as the extraVars input of the workflow
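To show how the caller side and the workflow side fit together, here is a small sketch that wraps the "build JSON" step in one function and rejects an extraVars string that is not valid JSON. The function name buildLaunchBody, the variable callerVars, and the values 42 and "vm-001" are made up for illustration. It is also worth checking in your own setup that the job is configured to accept inventory, limit, and extra variables at launch. As far as I know, Tower ignores them otherwise.

```javascript
// Hypothetical helper mirroring the "build JSON" step of the workflow.
// extraVarsJson is the String input of "Run Ansible Job with Inputs".
function buildLaunchBody(inventoryId, hostname, extraVarsJson) {
    var extraVarsObj;
    try {
        extraVarsObj = JSON.parse(extraVarsJson);
    } catch (e) {
        throw "extraVars is not valid JSON: " + extraVarsJson;
    }
    return JSON.stringify({
        inventory: inventoryId,
        limit: hostname,
        extra_vars: extraVarsObj
    });
}

// Caller side: build the object, then serialize it for the String input.
var callerVars = { input1: "M1234", input2: "Linux Server" };

// 42 and "vm-001" stand in for a real inventory id and hostname.
var body = buildLaunchBody(42, "vm-001", JSON.stringify(callerVars));
// body is the string that goes into the POST request to .../launch/
```

Parsing the string inside the workflow and serializing the whole body once means extra_vars is sent as a nested object, not as a string inside a string.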

A last word of caution: Ansible Tower has a concept of jobs and job workflows. What you see above will run simple jobs. If you are interested in running Ansible workflows, drop me a mail via the contact form, or maybe I'll write something about this in the future. The difficulty with running a workflow lies in detecting failures, but that is a topic for a different post.